This is my personal digital portal. I am into wireless technology and standardization, and I am passionate about programming. Posts on this portal are strictly my personal opinion and are not an endorsement by my current or previous employers.

The portal is the home for my blog posts and articles, in addition to those that I usually post on #LinkedIn, Medium or Twitter.

Sendil Kumar

From a communication engineer’s perspective: some thoughts, and a collection of responses and information from the web


The views expressed in this article are purely personal and do not necessarily reflect the views of any organization.

A Digital Twin is a digital replica of the real world (or at least a few parts of it), with its geometry and physical parts.

Digital twins (DT) have emerged as a powerful technology with applications across various industries. Research and development efforts are surging, along with real-world adoption in gaming, manufacturing, and even government initiatives for smart city infrastructure. It has also drawn the attention of the ITU [1], leading to a few recommendations (see ITU-T Y.3090) and focus groups.

One might consider digital twins an advanced evolution of the metaverse concept, which focuses on user interaction within a virtual world. However, digital twins offer a distinct set of capabilities.

The metaverse itself has been around for a few years in the gaming industry. For example, the well-known multiplayer game PUBG has a shared virtual world where multiple players interact in real time. Recall also the Microsoft Flight Simulator game, where various landmarks, airports and runways are preloaded and the server provides real-life data and flight information that are overlaid on the game. This geographical information typically covers only the environment and gives good visual appeal, whereas the simulated flight itself includes various physics and interaction with the console and controls.

As we advance towards the digital twin (DT), real-world objects (static as well as moving) and the environment/topography are represented with some level of positional precision and synchronously updated in the digital space. This may initially sound no different from a live video stream of a location or a factory floor, but it is not. The digital twin is a data-driven representation of physical entities. These entities are represented with a good geometric and functional approximation, which may even include other properties like material, weight and various states.

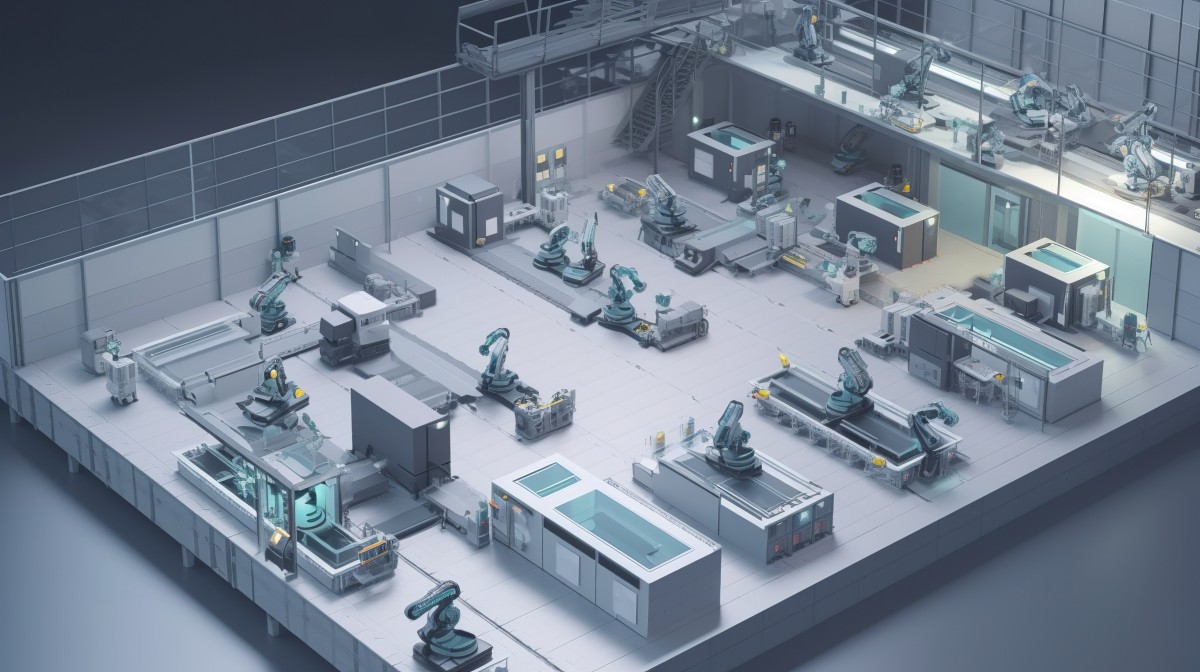

Take, for example, a sophisticated manufacturing plant (see figure). The DT would encompass every machine, its properties (material, weight), and its real-time operational state. Crucially, these digital objects can be interacted with programmatically, allowing for simulations, optimization, and remote control.
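To make the idea of a programmable digital object a little more concrete, here is a minimal Python sketch. The class name `MachineTwin`, its fields, and the 80 °C threshold are all hypothetical and chosen only for illustration; a real DT platform would define its own information model.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class MachineTwin:
    """Hypothetical digital object for one machine on a factory floor."""
    asset_id: str
    material: str
    weight_kg: float
    position_m: Tuple[float, float, float]  # x, y, z in the plant's frame
    state: Dict[str, float] = field(default_factory=dict)

    def apply_update(self, readings: Dict[str, float]) -> None:
        """Merge the latest sensor readings into the twin's state."""
        self.state.update(readings)

    def is_overheating(self, limit_c: float = 80.0) -> bool:
        """Example programmatic query; the limit is an arbitrary assumption."""
        return self.state.get("temperature_c", 0.0) > limit_c


press = MachineTwin("press-01", "steel", 1250.0, (4.0, 2.5, 0.0))
press.apply_update({"temperature_c": 85.5, "rpm": 1200.0})
print(press.is_overheating())
```

The static properties (material, weight) sit next to the live state, so the same object can feed both a renderer and a simulation or alerting rule.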

If DT has to scale and become mainstream by 2030, here are the key aspects to consider. The following sections are based on my survey of aspects like standards, physics models, requirements on network communications, cloud operations, etc.

Are there industry standards and specifications to create a digital twin and to store, share and render it over multiple devices?

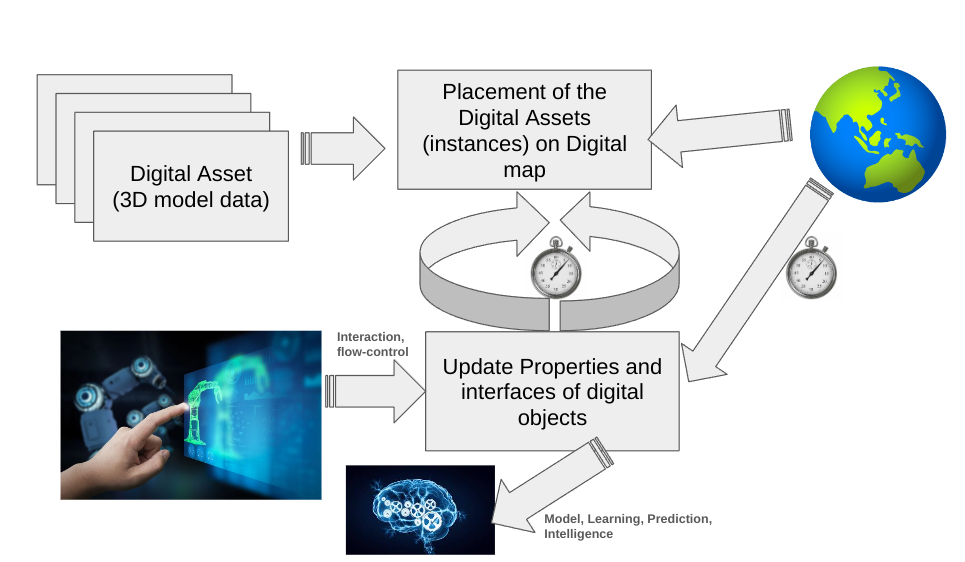

Standardized specifications have an important role: representing the digital assets (for reusability and large-scale adoption); representing the digital twin with the relative positioning of the assets within it; continuously updating the states of these assets from the real world (through various connectivity networks); and making them accessible through various cloud applications to learn, model and validate aspects such as mechanical assembly, physics engines and even fluid mechanics simulations.

To visualize digital assets over various devices and client interfaces, there are various industry initiatives.

Choosing the right standards and specifications depends on several factors:

A successful multi-device digital twin project requires a well-defined information model that clearly outlines the data and functionalities required in the digital twin.

The industry is constantly evolving, so staying updated on emerging standards and tools is essential for creating effective digital twins accessible across multiple devices.

There isn’t a single, unified standard for creating digital twins and rendering them across multiple devices. However, there are several industry practices and specifications that play a crucial role.

As mentioned earlier, one of the crucial components of a Digital Twin is to continuously monitor and reflect the states of various real-world objects and environments in the DT. For example, in the DT of a factory floor, the states and processes of different machines, the positions of the AGVs, etc., could be key. For city infrastructure, traffic congestion, vehicle movements and environmental sensors may be essential. These sensors and devices need to communicate with the DT server to send event-driven or periodic data. In most cases, not all sensors/devices use the same connectivity platform. They may be geographically distributed, or limited by capabilities like power, form factor, etc. The DT server also needs to propagate these events/states to the connected clients to facilitate rendering.

There are several approaches for propagating events (changes) in a digital twin to client devices/platforms, depending on the chosen architecture and communication protocols. Here are some common methods:

1. Publish-Subscribe (Pub/Sub) Messaging: A central message broker acts as a hub. The digital twin core (server-side) publishes event messages whenever something changes (e.g., sensor data update, equipment status change). Rendering clients subscribe to specific topics or channels relevant to them. When a new event message is published, the message broker pushes it to all subscribed clients.

2. Real-time APIs: The digital twin core exposes APIs that rendering clients can call to fetch the latest state or specific data points. Clients can continuously poll the APIs at a set interval to check for updates. Alternatively, server-push mechanisms within the API can be implemented where the server pushes updates to subscribed clients when changes occur. This method gives clients more control over data retrieval but requires more development effort.

3. WebSockets: These persistent, two-way communication channels enable real-time data exchange between the server and clients. The digital twin core can initiate a WebSocket connection with clients and then push event messages whenever something changes in the simulation. Clients can also send messages back to the server, enabling interactive features within the digital twin.

4. Cloud-Based Solutions: Some cloud platforms specifically designed for digital twins offer built-in functionalities for event propagation. These platforms handle communication between the server-side logic and rendering clients, often using a combination of Pub/Sub or WebSockets under the hood. This approach simplifies development but might introduce vendor lock-in.
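As a rough illustration of the publish-subscribe method in the list above, the following Python sketch implements a tiny in-process broker. It is a teaching aid only: the `Broker` class and the topic names are hypothetical, and a real deployment would put a networked message broker between the DT core and the rendering clients.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List

Handler = Callable[[Dict[str, Any]], None]


class Broker:
    """Minimal in-memory stand-in for a message broker (illustrative only)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a client callback for one topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Dict[str, Any]) -> int:
        """Push the event to every subscriber of the topic; return the count."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


broker = Broker()
received_a: List[Dict[str, Any]] = []  # events seen by rendering client A
received_b: List[Dict[str, Any]] = []  # events seen by rendering client B

# Hypothetical topic names; client B follows two topics, client A only one.
broker.subscribe("factory/agv/position", received_a.append)
broker.subscribe("factory/agv/position", received_b.append)
broker.subscribe("factory/press/status", received_b.append)

# The DT core publishes a state change; the broker fans it out.
delivered = broker.publish(
    "factory/agv/position", {"agv": "agv-7", "x": 12.4, "y": 3.1}
)
print(delivered, len(received_a), len(received_b))
```

The DT core never needs to know which clients exist; it only names a topic, which is what makes this pattern attractive when sensors and clients come and go.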

Choosing the right approach depends on factors like:

Regardless of the chosen method, it’s important to ensure efficient data transmission and minimize latency (delay) for a smooth user experience in the rendering clients.

What if two remote users interact with the Digital Twin? For example, rendering and state synchronisation between two clients and the server.

Synchronizing two clients and the server in a multi-user digital twin experience is crucial to maintain consistency in the visualized data, especially if the two users are in different wireless environments, cells or even technologies. I found these approaches being used in the DT industry.

Additional Considerations:

Network Latency Management: Network delays can cause inconsistencies in real-time scenarios. Techniques like client-side prediction and interpolation can be used to smooth out visual updates while waiting for server confirmation. In particular, 5G networks can define the expected QoS for a specific data pipe, referred to as a network slice.

Data Compression: Depending on the complexity of the digital twin data, compression techniques can be used to reduce network bandwidth usage during synchronization.
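As a small sketch of the data compression point, the snippet below compresses a JSON state update with Python's standard `zlib` module before it would be sent. The payload structure (200 identical temperature readings) is made up for illustration, and the achievable ratio depends entirely on how repetitive the real data is.

```python
import json
import zlib

# Hypothetical state update: 200 sensor readings with repetitive structure.
state = {
    "readings": [
        {"sensor": f"temp-{i:03d}", "value_c": 21.5, "status": "ok"}
        for i in range(200)
    ]
}

raw = json.dumps(state).encode("utf-8")
packed = zlib.compress(raw, 6)  # 6 is zlib's usual speed/size trade-off

# The receiving client reverses the two steps.
restored = json.loads(zlib.decompress(packed).decode("utf-8"))

print(len(raw), len(packed))
```

Compression trades CPU time for bandwidth, so it may not pay off on very small messages or on power-constrained sensors.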

By implementing these strategies, one can maintain a consistent and synchronized experience for all clients viewing the digital twin, even when working on different devices or with slight network delays.
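The interpolation technique mentioned under network latency management can be sketched as follows. This is a minimal example that assumes the client keeps the two most recent server snapshots of an object's 2D position and renders slightly in the past; the `Snapshot` layout and the function name are hypothetical.

```python
from typing import Tuple

Snapshot = Tuple[float, float, float]  # (timestamp_s, x, y) from the server


def interpolate(prev: Snapshot, nxt: Snapshot, render_time: float) -> Tuple[float, float]:
    """Linearly blend two server snapshots for the requested render time."""
    t0, x0, y0 = prev
    t1, x1, y1 = nxt
    if t1 <= t0:
        # Degenerate or out-of-order snapshots: fall back to the newer one.
        return (x1, y1)
    alpha = (render_time - t0) / (t1 - t0)
    alpha = max(0.0, min(1.0, alpha))  # clamp so we never extrapolate
    return (x0 + alpha * (x1 - x0), y0 + alpha * (y1 - y0))


# Halfway between two snapshots taken 0.5 s apart.
position = interpolate((10.0, 0.0, 0.0), (10.5, 2.0, 1.0), 10.25)
print(position)
```

Clamping means an object freezes at its last known position if updates stop arriving, which is usually less jarring than guessing where it went.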

A Digital Twin is not just for creating a snapshot of the real-world environment and the state of a system, but also for replay, for learning models, and for validating system physics through simulation of rigid [3] and soft [4] body mechanics. The most promising aspiration for a digital twin is to predict and prevent failures, or to take necessary actions in the real world through actuators.

The modelling and computational workload of a digital twin can be placed in a few different locations, depending on the architecture and complexity of the system. Here are the common scenarios:

Server-side Simulation: The core logic and physics engine reside on the server. Sensor data from the real-world counterpart (physical asset) is streamed to the server. The server-side physics engine simulates the behavior of the physical asset based on the received data and the implemented physical laws. The simulation outputs (e.g., updated sensor values, equipment status) are then sent to the rendering clients for visualization. This approach is advantageous for complex simulations requiring significant computational power or when dealing with sensitive data that cannot be exposed to client-side devices. However, it can introduce latency between real-world changes and their reflection in the visualization.

Client-side Simulation with Server-side Synchronization: Here, a lightweight physics engine might be integrated into the rendering client for real-time responsiveness. The client receives the initial state and data from the server. It then performs its own simulation based on the received data and a simplified physics model. This allows for smoother visualization and user interaction within the client. However, to maintain consistency, the client continuously synchronizes with the server. It sends its simulation updates to the server, and in return, receives corrections or confirmations to align with the authoritative server-side simulation. This approach offers a balance between responsiveness and accuracy, suitable for less complex simulations or when low latency is critical.

Hybrid Approach: A combination of server-side and client-side simulation can be implemented. The server handles the core simulation logic and complex physics calculations. Clients perform simpler physics tasks or visualizations based on the data received from the server. This approach leverages the strengths of both architectures, allowing for efficient resource utilization and tailored experiences for different clients.
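The client-side simulation with server-side synchronization described above can be sketched in a few lines of Python. This is a simplified, one-dimensional example under stated assumptions: the client uses constant-velocity motion as its "lightweight physics", and it simply snaps to the server value when drift exceeds a tolerance. The class name, the tolerance, and the snap-on-correction policy are all hypothetical; real systems often blend corrections more gradually.

```python
from dataclasses import dataclass


@dataclass
class ClientSim:
    """Hypothetical lightweight client-side model of one moving asset."""
    position: float          # metres along a track
    velocity: float          # metres per second, from the last server state
    tolerance: float = 0.05  # allowed drift before a correction is applied

    def predict(self, dt: float) -> float:
        """Advance the local model without waiting for the server."""
        self.position += self.velocity * dt
        return self.position

    def reconcile(self, server_position: float) -> bool:
        """Adopt the authoritative server value if drift is too large."""
        if abs(self.position - server_position) > self.tolerance:
            self.position = server_position
            return True
        return False


agv = ClientSim(position=0.0, velocity=2.0)
for _ in range(4):          # four render frames of 0.25 s each
    agv.predict(0.25)
predicted = agv.position    # what the client rendered locally

# The server's authoritative simulation reports that the AGV slowed down.
corrected = agv.reconcile(1.5)
print(predicted, corrected, agv.position)
```

The server stays authoritative, while the client only fills the gaps between updates, which is the balance between responsiveness and accuracy that the text describes.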

The choice of where to implement physics modelling depends on several factors:

The specific physics aspects simulated in a digital twin of a machine or environment depend on the complexity and the intended use of the digital twin. One can create a digital twin that accurately reflects the relevant physics of the machine or environment (for urban infrastructure) to derive valuable insights and predictions about behavior in the real world. One should be able to predict potential events based on training from these data sets, and to develop correlations between widespread real-world events.

Ultimately, the goal is to achieve a balance between accuracy, performance, and a seamless user experience for interacting with the digital twin.

Can't stop imagining the requirements that Digital Twins can impose on the wireless networks of the future!!

A quick glance at the elements of DT that impact network design - bandwidth requirements for sending digital twin assets, rendering and event streaming:

Size of Assets: Complexity of 3D models directly impacts file size. High-fidelity models with intricate details require more bandwidth compared to simpler models. Techniques like level-of-detail (LOD)^[LOD https://www.cgspectrum.com/blog/what-is-level-of-detail-lod-3d-modeling ] can be used to adjust model complexity based on viewing distance, reducing the data sent for distant views.

Textures and Materials: High-resolution textures and complex materials increase file size and the bandwidth needed to transfer them. Texture compression techniques and optimized material properties help make transmission more efficient.

Frequency of Events: The number of updates sent per unit time significantly affects network bandwidth usage at both the sensors and the rendering clients. For highly dynamic simulations with frequent sensor data changes along with user interactions, bandwidth requirements will be much higher.

Data Encoding and Compression: Choosing efficient data formats plays a crucial role. Standardized formats like glTF often incorporate compression mechanisms to reduce file size without sacrificing quality significantly. Additional compression techniques can be applied during transmission depending on the chosen communication protocol (wired, wireless).

Network Conditions: Bandwidth availability and latency (delay) on the connection between server and clients impact how much data can be sent effectively. Lower bandwidth or high latency might necessitate reducing data complexity or implementing strategies like data chunking for smoother transmission.
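To get a feel for how these elements add up, here is a back-of-the-envelope Python sketch. All the numbers (500 sensors, 10 updates per second, 100-byte payloads, 50 bytes of protocol overhead, a 50 MB asset on a 100 Mbit/s link) are assumptions picked for easy arithmetic, not measurements, and decimal units are used throughout (1 MB = 10^6 bytes, 1 Mbit/s = 10^6 bits/s).

```python
def event_stream_kbps(
    num_sources: int,
    updates_per_s: float,
    payload_bytes: int,
    overhead_bytes: int = 0,
) -> float:
    """Aggregate event traffic in kbit/s for a set of identical sources."""
    bits_per_s = num_sources * updates_per_s * (payload_bytes + overhead_bytes) * 8
    return bits_per_s / 1000.0


def asset_download_s(asset_mb: float, link_mbps: float) -> float:
    """Ideal transfer time for one asset, ignoring latency and protocol overhead."""
    return asset_mb * 8 / link_mbps


# 500 sensors x 10 updates/s x 150 bytes x 8 bits = 6,000,000 bit/s
sensor_load = event_stream_kbps(500, 10, 100, 50)

# 50 MB x 8 bits / 100 Mbit/s = 4 s
download = asset_download_s(50.0, 100.0)

print(sensor_load, download)
```

Even with small payloads, the event stream scales linearly with both the number of sources and the update rate, which is why update frequency is often the first parameter to tune.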

Every industry is assessing the application and usability of Digital Twins and the essential information needed to accurately represent the real-world scene and metrics. A big challenge is to provide sufficient connectivity to the compute machines and rendering machines, and to stream sensing data on the environment and on the states and functional metrics of machines or infrastructure.

Industries are embracing Digital Twins: Businesses across various sectors are recognizing the potential of Digital Twins to create virtual representations of physical assets and processes. This allows for better monitoring, analysis, and optimization.

Accurate Representation is Crucial: For a Digital Twin to be truly valuable, it needs accurate and timely data reflecting the real-world scenario. This data includes metrics like sensor readings, equipment states, and environmental conditions.

Therefore, the challenge lies in establishing a robust and scalable network infrastructure that can handle the continuous flow of data between these various elements. This includes addressing issues like:

Electromagnetism, electrical components, electromagnetic simulations.

[1] Digital Twin - https://www.itu.int/cities/dt-resource-hub/digitaltwin/

[2] A few well-known repositories are Sketchfab, GrabCAD, TurboSquid and Thingiverse. Some industrial automation software providers offer libraries of pre-built models for specific equipment types (e.g., valves, pumps, robots) used in their platforms. Manufacturing consortia repositories are another source.

[3] Rigid body mechanics simulation includes the motion (linear, angular) and interaction of solid objects within the machine; forces (including gravity and motor forces) and the resulting torques causing rotations; and object collisions, including aspects like elasticity, friction, and energy transfer during impacts. For machines involving fluids (e.g., pumps, turbines, hydraulic systems), computational fluid dynamics (CFD) models the movement of fluids, considering factors like pressure, viscosity, and flow rates, and their interaction with solid parts, which affects forces and can cause vibrations or deformations. Thermodynamics, such as temperature distribution, may also be included.

[4] Soft body physics can be employed to simulate flexible components like belts or hoses.